Anthropic Vs. DoD

While Anthropic and the Pentagon argue over contracts, the real opportunity belongs to those who know where their hunter-gatherer brain ends and AGI begins

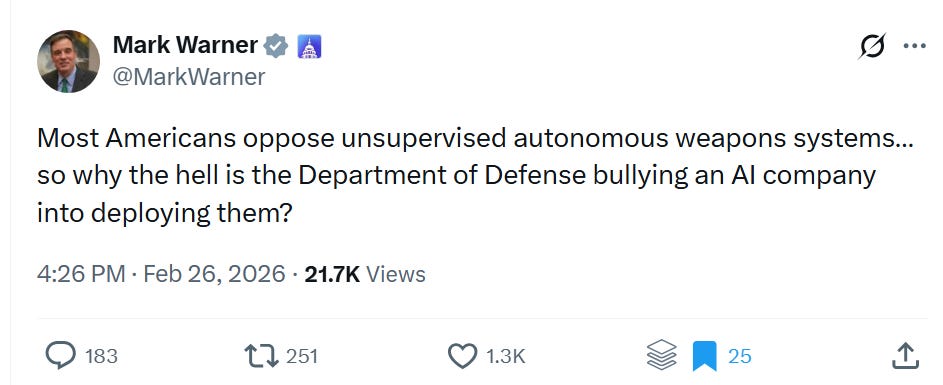

Senator Mark Warner posted a tweet last night that got a lot of engagement. “Most Americans oppose unsupervised autonomous weapons systems,” he wrote. “So why the hell is the Department of Defense bullying an AI company into deploying them?”

Twenty thousand views. Twelve hundred likes. The replies split predictably between people who trust the military and people who don’t, both sides operating from the same Hollywood mental model, the one where defense engineers are either reckless cowboys chasing contracts or cold bureaucrats indifferent to consequences.

I want to replace that mental model with a real one. Not because Warner’s politics bother me, but because the mental model he is feeding is expensive. It costs us clarity at exactly the moment we need it most. And if you are trying to understand where value gets created as artificial intelligence matures, operating from a bad map is a fatal error.

The Pentagon is decades past unsupervised autonomous weapons systems.

I was at McDonnell Douglas in 1989. What I watched being built there changes everything about how you should read this debate.

What We Were Already Doing in 1989

When I joined McDonnell Douglas as a young engineer, part of my technical education involved learning LISP and Prolog. Those are not languages most people have heard of today. In 1989 they were the two primary tools for building expert systems, symbolic AI that could encode human reasoning into software and execute it faster than any human could process the same information.

A note to regular St. Louis readers: Bob Lozano was my instructor at WashU in 1989, teaching me LISP and Prolog. Ten years later, I was an early investor in his startup that pioneered fabric compute, the first attempt to commercialize what ultimately became AWS-like infrastructure. At McDonnell, we had built early forms of fabric compute in 1992 to run in-silico models for computational fluid dynamics and finite element analysis at night across 1,000 HP 720 workstations. This eliminated, for the first time in aircraft development, the need to build physical destruct articles ($500M-$800M in cost). The ultimately correct compute architecture, though, was a combination of software and hardware, well beyond RISC. NVIDIA and AWS ultimately won that race. Jim Simons was working in parallel at Renaissance Technologies, doing very similar work to develop quant investing.

In AI terms, we were not building toys. We were building weapons solutions for the F/A-18.

The system worked like this. The aircraft’s sensors gathered threat data continuously. The expert system assessed the threat environment, identified the optimal weapons response, and surfaced a recommendation to the pilot. The pilot did not click through menus or navigate options under stress at five hundred miles an hour. He accepted or rejected a plan with a single input, hands on throttle and stick, HOTAS, exactly the way a developer today accepts or rejects a Claude CLI plan before executing code. The tech behind this is still novel today, and I left a lot out of my description.

The human was in the loop. The cognitive compression was handled by the machine. And this was production software on a front-line fighter aircraft thirty-six years ago.
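For readers who think in code, the recommend-then-confirm pattern can be reduced to a toy. This is a minimal sketch of a generic rule-based recommender with a single accept-or-reject input, not the avionics software, which I have not described in any detail. Every name here (`Rule`, `recommend`, `decide`, the facts `range_km` and `closing`, and the plans `plan_A` through `plan_C`) is hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Rule:
    """One hypothetical if-then rule: a condition over sensed facts and the plan it proposes."""
    name: str
    condition: Callable[[dict], bool]
    plan: str
    priority: int  # higher priority wins when several rules fire


def recommend(facts: dict, rules: list[Rule]) -> Optional[Rule]:
    """Return the highest-priority rule whose condition matches the facts, or None."""
    fired = [r for r in rules if r.condition(facts)]
    return max(fired, key=lambda r: r.priority) if fired else None


def decide(facts: dict, rules: list[Rule],
           operator_accepts: Callable[[str], bool]) -> Optional[str]:
    """The machine proposes exactly one plan; the human accepts or rejects it."""
    rule = recommend(facts, rules)
    if rule is None:
        return None
    return rule.plan if operator_accepts(rule.plan) else None


# Illustrative rule base; the thresholds and plan labels are made up.
RULES = [
    Rule("close_fast", lambda f: f["range_km"] < 10 and f["closing"], "plan_A", 2),
    Rule("distant", lambda f: f["range_km"] >= 10, "plan_B", 1),
    Rule("any_closing", lambda f: f["closing"], "plan_C", 0),
]

facts = {"range_km": 6, "closing": True}
accepted = decide(facts, RULES, operator_accepts=lambda plan: True)
rejected = decide(facts, RULES, operator_accepts=lambda plan: False)
print(accepted, rejected)
```

The design point is that the operator never browses the rule base. The machine collapses many candidate rules into one proposal, and the human contributes a single bit of judgment.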

Phalanx had been doing something even more autonomous since 1980. The close-in weapons system detects an incoming missile, tracks it, and engages it without any human input at all. The threat envelope is too compressed for human reaction time. A human in that loop would mean a dead ship. Nobody called this a civil liberties crisis for four decades because the system was solving a physics problem, not a political one.

Frankly, when a Marine rifleman shoots at a threat, is that cognition or thousands of hours of training programmed into muscle memory?

When Senator Warner tweets about autonomous weapons as if they arrived last Tuesday, he is describing a system architecture that has been operational on US Navy ships since Jimmy Carter was President.

The Lineage of AI Is More Obscure

AI is not new.

Claude Shannon published his foundational work on information theory in 1948. Shannon was at Bell Labs, but the military was among the primary funders and consumers of that intellectual tradition. The core insight, that information can be quantified, that signal can be extracted from noise with mathematical precision, was immediately recognized as a doctrine for warfare. If information superiority creates force multiplication, and it does, then Shannon’s mathematics was a weapons system before it was anything else.

The United States military absorbed these principles and built network-centric warfare around them decades before any commercial enterprise understood what information superiority meant as an operational concept.

John Nash developed his equilibrium theory under RAND Corporation grants in the early 1950s. RAND existed to solve military problems. The military needed formal models for adversarial decision-making, for war games where each side’s optimal strategy depends on what the other side does. Nash gave them the mathematics. What we now call game theory was built to model conflict before it modeled anything else.

Jim Simons was dismissed from his NSA codebreaking post in 1968 after publishing a New York Times letter arguing America should invest its intellectual capital in innovation rather than war. He then did exactly that, applying defense-funded mathematics to financial markets and building Renaissance Technologies, the most successful quantitative fund ever created. The market is just another information environment. The insight wasn’t new. The application domain was.

Anthropic named their AI system Claude, reportedly after Claude Shannon. Adversarial training methods in modern machine learning, generative adversarial networks among them, are framed as a search for a Nash equilibrium between competing networks. Reinforcement learning from human feedback, used to train systems like Claude, relies on reward modeling that carries a related optimization logic to the one Nash formalized for RAND.

The military funded the mathematics. Absorbed it into doctrine. Ran it as a competitive advantage for decades. And now an AI company named after the mathematician whose work the Pentagon operationalized in the 1950s is in a public contract dispute with that same institution over whether their terms of service permit the use of AI in weapons systems.

One might reasonably ask whether Anthropic has the military’s permission to commercialize its own intellectual descendants.

WarGames made WOPR the villain in 1983. Joshua plays tic-tac-toe to learn that nuclear war is unwinnable, and the movie treats that as a near-miss catastrophe caused by reckless military automation. What the movie didn’t tell you is that RAND had already formalized that exact logic twenty years earlier under defense contracts. Our nuclear response dynamic, all classified, derives from that game theory. The military wasn’t learning the lesson from Hollywood. Hollywood was dramatizing, badly, what defense strategists had already internalized decades before. The student lecturing the teacher about the dangers of the classroom.

Creative Destruction Applied to Violence

The Hollywood version of American military history runs roughly like this. Overwhelming force. Massive footprint. Collateral damage accepted as a cost of doing business. Dresden. Hiroshima. Vietnam.

The operational reality of the last forty years runs in the opposite direction.

The firebombing of Dresden in 1945 required over a thousand aircraft and killed somewhere between twenty-two thousand and twenty-five thousand people. It destroyed a city. In 2011, US special operations forces located, extracted, and killed Osama bin Laden in a seventy-nine-person operation that lasted thirty-eight minutes. No declaration of war. No collateral damage reported. Precise force applied to a precise target.

That trajectory is one of the most dramatic examples of creative destruction in modern institutional history, and almost nobody frames it that way. Information density replacing physical mass. Precision replacing scale. The same economic force that allowed Amazon to destroy brick-and-mortar retail with better logistics applied to the projection of force.

The Hellfire R9X carries no explosive warhead. Six blades deploy on impact. It is designed to kill one person in a confined space without the pressure wave that kills everyone around them. Passengers in the same vehicle have survived strikes. The precision is that exact. This is the opposite of a weapon of mass destruction. It is a weapon of mass reduction, minimizing harm to the point where a single human being can be removed from a single seat of a single car from ten thousand feet.

The people who built this capability did not do it because they are indifferent to civilian casualties. They did it because reducing civilian casualties was the engineering objective. Military doctrine. All signal. No noise. Precision is not a moral accident. It is a design requirement.

The defense establishment has been the most aggressive adopter of deflationary precision technology on earth. The footprint gets smaller. The accuracy gets higher. The collateral damage goes down. That is the forty-year arc. Warner’s tweet pretends it runs the other direction.

Where Failure Actually Comes From

There is a version of the Boeing 737 MAX story that gets told as a parable about corporate indifference to safety. Engineers cutting corners. Profits over lives. It is wrong in the ways that matter.

The engineers who flagged MCAS were overruled. The problem wasn’t engineering judgment. It was that engineering judgment was removed from the decision. The choice not to build a clean-sheet replacement aircraft because the capital cost and certification timeline were inconvenient for shareholders meant solving an aerodynamic problem caused by a repositioned engine through software rather than geometry. MCAS was an attempt to compensate for a human factors problem through autonomous correction. The concept was not wrong. The sensor redundancy was inadequate, and when that was raised, the people raising it were downstream of a financial decision that had already been made above them. In some ways, it was a decision that needed a 1M-token context window, where the average human runs about 20k tokens.

Meanwhile, customers were filling those aircraft with pilots who had a hundred hours of flight time instead of eight thousand. Emerging-market airlines, standing up fleets with fresh crews who had never gained the muscle memory of flying military aircraft, were introducing a human failure mode that more sophisticated autonomy would have caught. The accident chain required both failures simultaneously. A better autonomous system might have broken it.

The lesson is not that autonomy is dangerous. The lesson is that when people optimizing for financial or political outcomes override those optimizing for system integrity, complex engineered systems fail. The engineers who fly on the planes they design have the right incentive structure. The executives who don’t fly commercial have a different one. Small context windows.

HAL 9000 is the cultural reference point everyone invokes when thinking of AI gone wrong. It is worth remembering that HAL didn’t malfunction. HAL was given a contradictory mission by administrators who concealed information from the crew. The AI executed its instructions. The failure was in the humans who wrote the mission parameters, the people above the system, optimizing for a political outcome rather than a coherent one.

The risk in autonomous weapons is not the engineers at Raytheon or the career officers at CENTCOM. The risk is a civilian chain of command that wants systems capable of lethal action precisely because they don’t push back in the situation room. Generals provide friction. They ask about rules of engagement, escalation pathways, second-order effects. An autonomous system directed to skip that friction is a capability with enormous political appeal to exactly the wrong kind of decision-maker.

TIA, the Total Information Awareness program after 9/11, was built for narrow defense purposes.

In a twist of history, TIA was built under Admiral John Poindexter, after Iran-Contra. His office later drew on Robin Hanson’s work on prediction markets to build DARPA’s FutureMAP, a conceptual ancestor of today’s Polymarket. It is one architectural thread of the network-centric warfare pioneered by Tony Tether at DARPA in the late 90s.

The Patriot Act expanded TIA into dangerous realms. Politicians ordered NSA and others to reveal information about political opponents. The surveillance apparatus that civil libertarians rightly worry about was not built by rogue engineers pursuing power. It was built by engineers following orders from civilian leadership that had decided the political benefits outweighed the constitutional costs. The defense community was the instrument. The civilian political structure, trying to win an election, was the author.

Warner’s tweet performs oversight theater directed at the engineering community while the actual failure mode, political actors directing autonomous capabilities toward domestic or politically convenient targets, goes unaddressed because naming it would require naming specific politicians rather than a convenient institutional villain.

The Classified Room

Serious risk analysis requires two inputs: probability of failure and consequence of failure. You need both to make good decisions.
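The simplest way to combine the two inputs is to multiply them into an expected loss. That is a minimal sketch, assuming a single probability and a single consequence figure per scenario; real risk analysis works with distributions and is far messier. The function `expected_loss`, the scenario names, and every number below are hypothetical.

```python
def expected_loss(p_failure: float, consequence: float) -> float:
    """Expected loss = probability of failure x consequence of failure."""
    if not 0.0 <= p_failure <= 1.0:
        raise ValueError("p_failure must be between 0 and 1")
    return p_failure * consequence


# Hypothetical scenarios: (probability of failure, consequence in arbitrary units).
scenarios = {
    "rare_but_severe": (0.001, 1_000_000.0),
    "common_but_minor": (0.2, 1_000.0),
}

# Rank scenarios from highest to lowest expected loss.
ranked = sorted(scenarios, key=lambda name: expected_loss(*scenarios[name]), reverse=True)
print(ranked)
```

Drop either input and the ranking cannot be computed at all. With these made-up numbers, the rare scenario outranks the common one only because its consequence is known.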

Anthropic has the best current read on probability. They are closer than anyone to understanding how these systems behave at the edge of their training distribution, where emergent behaviors appear, how close current architectures are to something that surprises their designers. That knowledge lives inside their safety research and their operational data. It is not in any public document.

The Department of Defense has the most complete picture of consequences. What does a misaligned AI system do at operational scale in a combat theater? What does the cascade look like when a system receives contradictory inputs in an ambiguous threat environment? What are the second and third-order effects of an autonomous engagement decision that turns out to be wrong? That knowledge lives in classified operational experience and decades of war game modeling.

Neither institution has both inputs. Neither can do the risk analysis alone. It is a near-perfect match, one that would extend both defense and commercial value. A public contract dispute does not close that gap. It advertises it. Every tweet in this thread tells our adversaries exactly where the friction points are in the American AI development ecosystem. China and Russia are not pausing their autonomous weapons programs to read Senator Warner’s mentions. Anthropic’s concern is an unenforceable edge condition whose only value is theater.

The correct governance model is a classified collaboration framework where Anthropic’s safety researchers and DoD’s operational analysts work through the probability-consequence matrix together. Not because accountability doesn’t matter. Because accountability that is structurally incapable of accessing the actual information is theater, not oversight. The public debate about autonomous weapons is happening in a room with no windows.

The Sound Barrier

The specific debate about Anthropic and the Pentagon is a small window onto something much bigger.

Human scientists have spent centuries trying to decode the universe with a three-pound biological brain that evolved to find food and avoid predators. We are the instrument attempting to measure something far beyond our instrument’s range. Our cognitive ceiling is not a limitation we chose. It is the ceiling evolution built when the marginal return on additional brain complexity stopped justifying the metabolic cost.

We hit that ceiling tens of thousands of years ago.

Everything we have built since then, language, mathematics, institutions, markets, the entire architecture of civilization, has been an attempt to extend our individual cognitive range through collective coordination. Shannon’s information theory was an attempt to formalize what the brain does when it extracts signal from noise. Nash’s equilibrium was an attempt to model what happens when multiple bounded intelligences make decisions simultaneously. The F/A-18 expert system running on LISP and Prolog in 1989 was an attempt to offload the weapons-selection cognitive load so a human pilot could focus on staying alive.

The trajectory is one continuous line.

The question of what happens when we build a system that is not bounded by the same evolutionary ceiling is the most important question of this century. Artificial superintelligence, if we get there, will be the first entity in the known history of the universe capable of looking at the fabric of physical reality without our perceptual constraints. Our minds literally cannot perceive certain structures in quantum mechanics. Not because we haven’t tried hard enough. Because the instrument, our brain, is the limit.

Breaking that ceiling is the sound barrier of human evolution. Everything shakes at the approach. The instrument is at its design limit. What waits on the other side is different physics.

The defense community has been living with this question longer than anyone else. They were running the first expert systems on military hardware while the rest of the world was playing Pong. They absorbed Shannon when Silicon Valley was still an orange grove. They operationalized Nash before it appeared in an economics textbook. They have been asking, seriously and at classified depth, what happens when the system exceeds the operator’s comprehension, for four decades.

That is not the community Senator Warner’s tweet was describing.

The question for the investor is not whether this transition happens. It is whether your model of the world is built for the curve that is coming or the one that just passed.

Beyond the Hunter-Gatherer Brain

Venture capital has always been described as pattern recognition. The best investors have seen enough cycles that they can feel a dominant design forming before it becomes obvious to everyone else. The worst investors copy the last cycle and call it a thesis.

Both approaches share a flaw. They treat the innovation curve as something you read with intuition rather than something you measure.

The forty-year arc I have been describing is not intuition. It is calculus. The innovation curve in any sector has three measurable derivatives. The first is velocity, the rate at which new architectural experiments are entering the market. The second is acceleration, whether that rate is increasing or decreasing. The third is the inflection, the moment when latent demand activates at scale and a dominant design begins to emerge. Rebecca Henderson, who shaped my thinking on this at MIT, showed that organizational architecture is the gating function on the first derivative. Clayton Christensen showed that latent demand activation is the gating function on the third. The second derivative is where the investor who can read both forces simultaneously has an edge nobody else has.
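In practice, "measuring the derivatives" of a curve sampled once a year means taking successive differences. The sketch below assumes the raw series is a cumulative count of architectural experiments in a sector, and it uses the third difference as a rough stand-in for the inflection signal. The numbers are invented for illustration and are not fund data; `diff` is a hypothetical helper.

```python
def diff(series: list[float]) -> list[float]:
    """Discrete derivative: the change between consecutive observations."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


# Hypothetical cumulative count of experiments observed at the end of each year.
cumulative_experiments = [10, 12, 15, 20, 28, 40]

velocity = diff(cumulative_experiments)  # new experiments per year
acceleration = diff(velocity)            # is the rate rising or falling?
third = diff(acceleration)               # change in acceleration

print(velocity)      # [2, 3, 5, 8, 12]
print(acceleration)  # [1, 2, 3, 4]
print(third)         # [1, 1, 1]
```

Each difference costs one observation, so six years of data yield only three readings of the third derivative. That is why the width of the sensor array matters more than the cleverness of the arithmetic.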

This is what network-centric investing actually means. Not a portfolio of bets. A measurement infrastructure. Over ten years our fund has built direct relationships with over six thousand food and agriculture startups, twelve hundred farmers operating at the frontier of precision agriculture, and eighty portfolio companies converting experimental architectures into scale. That network is not a source of deal flow. It is a sensor array. It reads the first derivative in real time across a sector that represents the largest unsolved productivity gap in the American economy.

The sectors with the worst total factor productivity growth in Bureau of Labor Statistics data, healthcare, food, agriculture, have something in common with the military in 1945. They are information-poor environments where physical mass is still being substituted for intelligence. Dresden instead of the Hellfire R9X. The transition is not a question of whether. It is a question of when the second derivative turns positive and the third becomes visible.

The data we have accumulated over a decade, across startups, farmers, and portfolio companies building in parallel, is the foundation for reading that curve. Not predicting it. Reading it. The way Shannon read signal from noise. The way the F/A-18 expert system read the threat environment and surfaced a weapons solution before the pilot’s conscious mind could process the same information.

The biological brain that evolved to hunt and gather is the instrument and the limit simultaneously. It can see patterns in its own experience. It cannot see patterns across six thousand simultaneous experiments happening in parallel without a system that aggregates, weights, and surfaces signal from that complexity. That is what AI does to the investor’s cognitive load. It is the HOTAS system for capital allocation. The decision remains human. The compression is handled by the machine.

This is not a metaphor. The people building expert systems at McDonnell Douglas in 1989 were asking the same question we are asking now. How do you extend human judgment beyond what human hardware can process in real time? The answer then was LISP and Prolog running on avionics hardware. The answer now is a network of agents reading convergence signals across a sector in the fluid phase, surfacing the acceleration curve before the dominant design becomes legible to anyone without the sensor array.

The sound barrier of human evolution is not a technological event. It is an information event. We do not transcend our evolutionary limits by building smarter machines. We transcend them by building systems that read the patterns our three-pound brains cannot hold simultaneously. The next generation of investing is not about picking better companies. It is about having a better map of where the curve is going before the curve itself knows.

The optionality is still cheap. The second derivative is positive but early. The third derivative is approaching.

Three questions worth sitting with this weekend:

If the military funded the mathematics that created modern AI, and AI is now being used to read innovation curves across every sector of the economy, what does it mean that most investors are still making decisions with tools Shannon would have recognized as pre-information-theoretic?

The Hellfire R9X replaced Dresden through forty years of precision engineering, shrinking the footprint while increasing the accuracy of each action. What happens to the industries that still operate like Dresden when the same deflationary precision force arrives at scale?

Every dominant design in history was invisible until the moment it became obvious. The people who could read the second derivative before the inflection point captured the transition. What would you need to see right now to know the third derivative is arriving in food, health, or agriculture before anyone else does?

Creative Destruction covers the innovation forces reshaping food, health, energy, and the broader economy. If you found this useful, share it with someone who would argue with it over dinner.